@claudio.gebbia, I thought about this some more, and I think there is an even simpler solution: use linked records inside a master record, and publish only the master record on a schedule. I think that would be simpler than any of the options above.

Example:

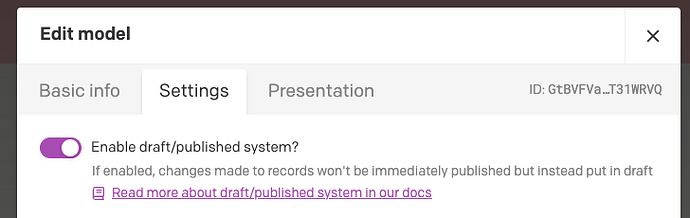

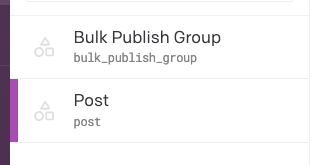

I have two models, both with drafts enabled:

The posts are the records I want to schedule for publishing in bulk, but I don’t want to set a schedule on each one individually.

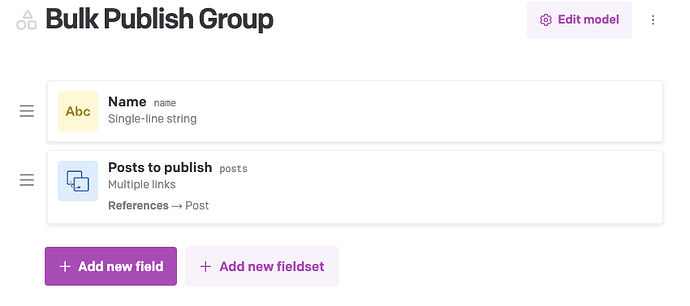

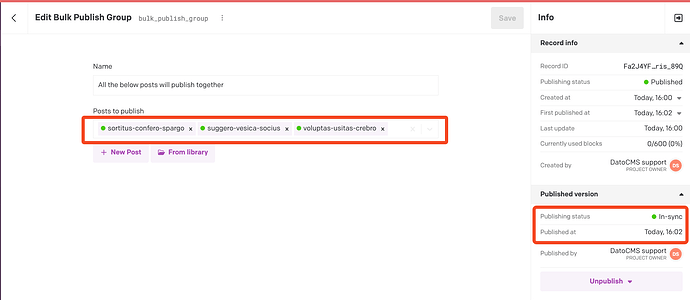

Instead, I create a separate model for this purpose. Let’s call it bulk_publish_group:

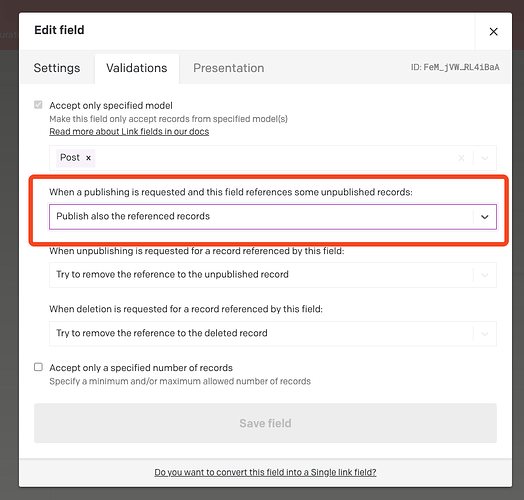

Its schema is very simple. In my example I added a title field for clarity, but it really needs only one field: a “Multiple links” field with its validation set to “Publish also the referenced records”:

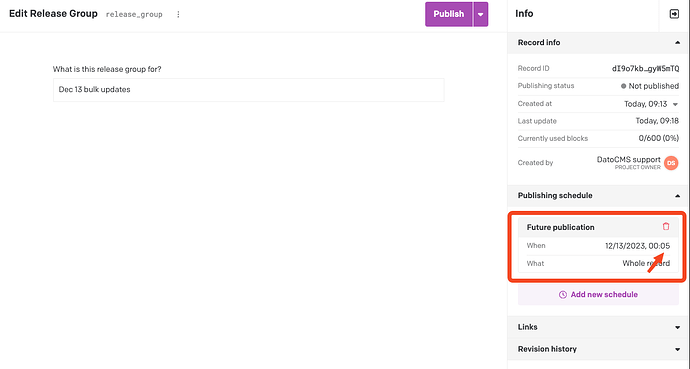

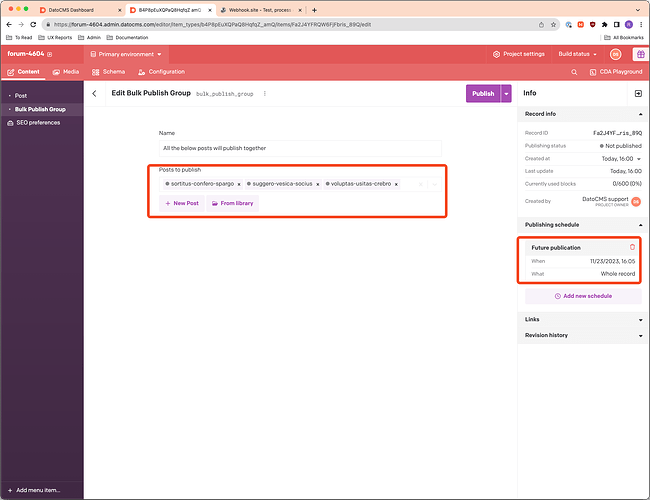

Then I create a new bulk_publish_group record and link it to all the posts I want to schedule. I save that record and set a scheduled publish date ONLY on the bulk_publish_group record, not on the individual posts.

Because of the validation we set earlier, when the bulk_publish_group record is published, all the linked posts are automatically published along with it:
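To make the cascade a bit more concrete, here is a toy Python model of it. None of this is DatoCMS code: `Record`, `publish` and the `publish_references` flag are hypothetical names, with the flag standing in for the “Publish also the referenced records” validation. It's only a sketch under that assumption, not how the CMS actually implements it.

```python
from dataclasses import dataclass, field


@dataclass
class Record:
    """Hypothetical stand-in for a CMS record with a draft/published state."""
    name: str
    published: bool = False
    # Records referenced through a "Multiple links" field (repr=False avoids
    # infinite recursion in repr() if links ever form a cycle).
    links: list["Record"] = field(default_factory=list, repr=False)
    # Assumption: mirrors the "Publish also the referenced records" validation.
    publish_references: bool = False


def publish(record: Record) -> list[str]:
    """Publish a record and, if it cascades, any unpublished linked records.

    Returns the names of the records published by this single call.
    The record is marked published before recursing, so link cycles terminate.
    """
    record.published = True
    published = [record.name]
    if record.publish_references:
        for linked in record.links:
            if not linked.published:
                published.extend(publish(linked))
    return published


posts = [Record(f"post-{i}") for i in range(1, 4)]
group = Record("bulk_publish_group", links=posts, publish_references=True)

print(publish(group))  # ['bulk_publish_group', 'post-1', 'post-2', 'post-3']
print(all(p.published for p in posts))  # True
```

Only the group record is ever passed to `publish`; the posts go live because of the flag on the link, which is the same idea as the validation in the screenshots.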

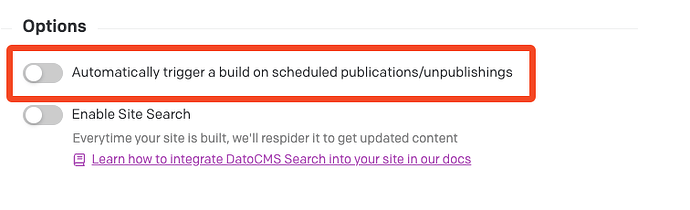

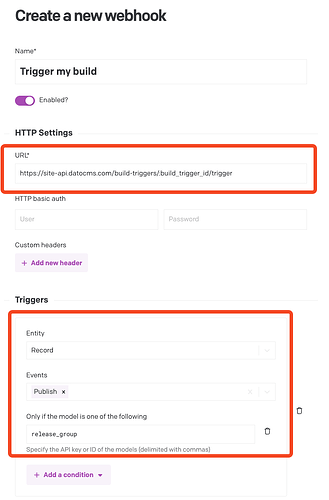

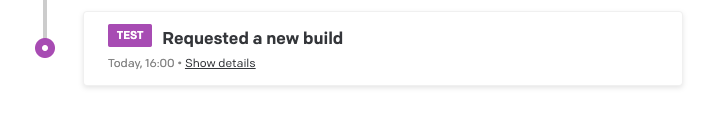

But because there was actually only one scheduled publication (the one on bulk_publish_group), the build trigger fires only once.
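A rough way to see why this matters for builds, as a hypothetical Python sketch. It assumes, as described above, that each scheduled publication fires one build trigger no matter how many records it publishes. `Scheduler`, `schedule` and `run_due_jobs` are made-up names for illustration, not a DatoCMS API.

```python
from dataclasses import dataclass, field


@dataclass
class Scheduler:
    """Hypothetical scheduler where each scheduled publication fires one build."""
    jobs: list[list[str]] = field(default_factory=list)
    builds_fired: int = 0

    def schedule(self, record_names: list[str]) -> None:
        # One scheduled publication; it may publish several records at once
        # (e.g. a group record plus the posts it links to).
        self.jobs.append(record_names)

    def run_due_jobs(self) -> list[str]:
        published: list[str] = []
        for names in self.jobs:
            published.extend(names)
            self.builds_fired += 1  # assumption: one build per scheduled publication
        self.jobs.clear()
        return published


posts = [f"post-{i}" for i in range(1, 6)]

# Scheduling every post individually: five scheduled publications.
per_post = Scheduler()
for p in posts:
    per_post.schedule([p])
per_post.run_due_jobs()
print(per_post.builds_fired)  # 5

# Scheduling only the group record, which publishes its linked posts with it.
grouped = Scheduler()
grouped.schedule(["bulk_publish_group", *posts])
grouped.run_due_jobs()
print(grouped.builds_fired)  # 1
```

Same posts go live either way; the difference is only in how many scheduled publications (and therefore builds, under this assumption) it takes.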

Does that work for you? It should be simpler and cleaner than the above options.